Now, with the recent addition of ChatGPT to Azure OpenAI Service as well as the preview of GPT-4, developers can build their own enterprise-grade conversational apps with state-of-the-art generative AI to solve

It’s a convenient user interface built around one specific language model, GPT-3.5, which has received some specialized training. Maybe it’s surprising that ChatGPT can write software, maybe it isn’t; we’ve had

Amazon Web Services provides a host of AI solutions, such as facial recognition, online fraud detection, data anomaly identification, and image analysis, that are helpful for end users as well as large enterprises.

While the recently announced new Bing and Microsoft 365 Copilot products are already powered by GPT-4, today’s announcement allows businesses to take advantage of the same underlying advanced models

AIaaS provides out-of-the-box platforms and is easy to set up, making it simple to test out various public cloud platforms, services and machine learning (ML) algorithms. AIaaS platforms enable organizations to build

Deployment resource group – A deployment resource group hosts private Azure DevOps CI/CD agents (virtual machines) needed for the data management zone and a Key Vault for storing any deployment-related

This new wave of developers using Snowflake often requires more flexibility in the underlying compute infrastructure to unlock memory-intensive operations on large data sets such as ML training. With these

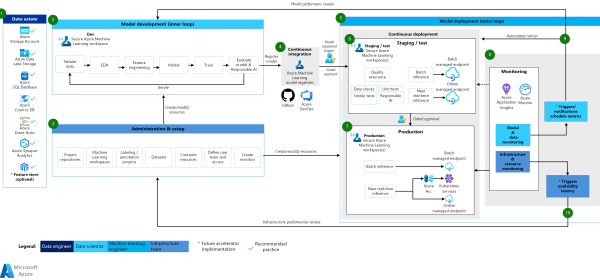

Microsoft Azure offers a myriad of services and capabilities. Building an end-to-end machine learning pipeline from experimentation to deployment often requires bringing together a set of services from across

SageMaker now automatically deploys the new variant in shadow mode and routes a copy of the inference requests to it in real time, all within the same endpoint. Once you complete a shadow test, you can use the
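The shadow-testing idea in the excerpt above (the production variant answers the caller while a copy of each request goes to the new variant) can be illustrated with a small in-process simulation. This is a minimal sketch under stated assumptions, not the SageMaker API: `ShadowEndpoint`, `invoke`, and `mean_abs_diff` are hypothetical names, the variants are plain Python functions, and the shadow call runs synchronously here for simplicity.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple

# A "model variant" is just a function from input to prediction in this sketch.
Model = Callable[[float], float]


@dataclass
class ShadowEndpoint:
    """Hypothetical simulation of shadow testing; NOT the SageMaker API."""
    production: Model
    shadow: Model
    # Each entry: (request, production output, shadow output or None on failure).
    shadow_log: List[Tuple[float, float, Optional[float]]] = field(default_factory=list)

    def invoke(self, request: float) -> float:
        # Only the production variant's prediction is returned to the caller.
        prod_out = self.production(request)
        # A copy of the same request goes to the shadow variant. Its output is
        # recorded for later comparison and never returned; errors are swallowed
        # so a faulty shadow variant cannot affect the caller.
        try:
            shadow_out: Optional[float] = self.shadow(request)
        except Exception:
            shadow_out = None
        self.shadow_log.append((request, prod_out, shadow_out))
        return prod_out

    def mean_abs_diff(self) -> float:
        """Average absolute disagreement between variants over successful shadow calls."""
        pairs = [(p, s) for _, p, s in self.shadow_log if s is not None]
        if not pairs:
            return 0.0
        return sum(abs(p - s) for p, s in pairs) / len(pairs)
```

For example, `ShadowEndpoint(production=lambda x: 2 * x, shadow=lambda x: 2 * x + 1)` returns the production answers unchanged while `mean_abs_diff()` summarizes how far the candidate drifts from them, which is the kind of comparison a shadow test is meant to support before promoting a variant.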

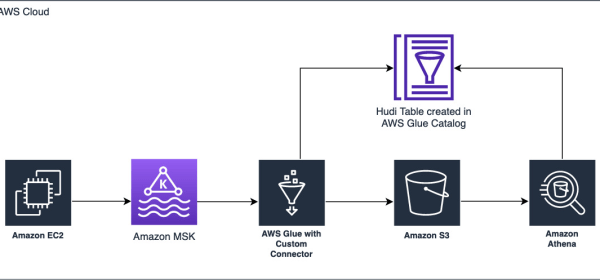

You can create AWS Glue Spark streaming ETL jobs using either Scala or PySpark that run continuously, consuming data from Amazon MSK, Apache Kafka, and Amazon Kinesis Data Streams and writing it to your

As shown in the following diagram, the FSx for Lustre CSI driver plugin is deployed to an Amazon EKS cluster to dynamically provision the FSx for Lustre file system with a given PVC. The Spark application driver and

The pseudonymization service is built using AWS Lambda and Amazon API Gateway. The request-response model of the API uses Java string arrays to store multiple values in a single variable, as depicted in the
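To make the idea of pseudonymizing several values in one request more concrete, here is a minimal Python sketch of a Lambda-style handler behind an API Gateway proxy integration. It is not the implementation described in the excerpt (which uses Java); the JSON field names `identifiers` and `pseudonyms`, the `PSEUDONYM_KEY` environment variable, and the choice of keyed HMAC-SHA256 as the pseudonymization technique are all assumptions for illustration.

```python
import hashlib
import hmac
import json
import os
from typing import Any, Dict, List


def pseudonymize(values: List[str], key: bytes) -> List[str]:
    """Map each input string to a deterministic keyed pseudonym (HMAC-SHA256, hex)."""
    return [hmac.new(key, v.encode("utf-8"), hashlib.sha256).hexdigest() for v in values]


def handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    """Lambda-style handler for an API Gateway proxy request.

    Assumes a hypothetical JSON body such as {"identifiers": ["a", "b"]} and a
    secret key supplied via the hypothetical PSEUDONYM_KEY environment variable.
    """
    key = os.environ["PSEUDONYM_KEY"].encode("utf-8")
    body = json.loads(event.get("body") or "{}")
    identifiers = body.get("identifiers")
    # Reject anything that is not a list of strings (the array of values to process).
    if not isinstance(identifiers, list) or not all(isinstance(i, str) for i in identifiers):
        return {
            "statusCode": 400,
            "body": json.dumps({"error": "identifiers must be a list of strings"}),
        }
    return {
        "statusCode": 200,
        "body": json.dumps({"pseudonyms": pseudonymize(identifiers, key)}),
    }
```

Because the mapping is deterministic for a given key, the same identifier always yields the same pseudonym, so pseudonymized records can still be joined. HMAC output cannot be reversed, so if re-identification is required, a reversible scheme such as encryption or a token vault would be needed instead.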

The data and model monitoring phase and the event and action phase of MLOps for NLP are where it differs most from classical machine learning. Classical machine learning: time-series forecasting, regression, and classification

My co-founder and I started Hunters in 2018 with a mission to revolutionize security operations. We designed the Hunters security operations center (SOC) platform to automatically identify threats, enable