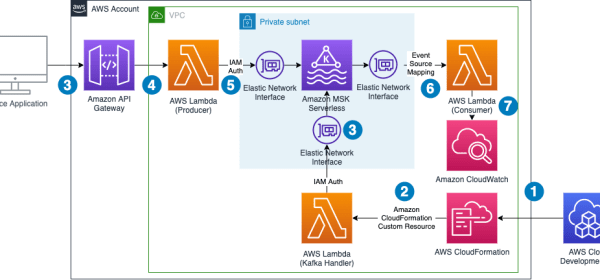

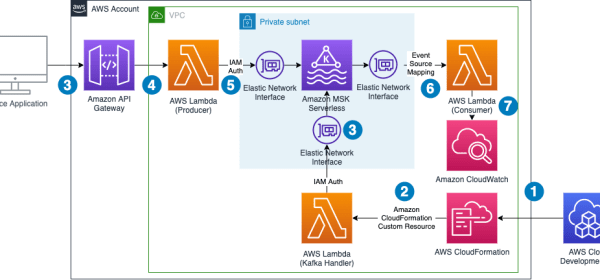

This creates a demo environment, including an MSK Serverless cluster, three Lambda functions, and an API Gateway that consumes the messages from the Kafka topic. API Gateway takes in producer requests

Depending on the size and scale of your workload, you can use Azure Functions as a code-first integration tool to perform text-processing steps, like text summarization on extracted data. Use Azure OpenAI to

Analytics Specialist Solutions Architect focused on big data and analytics and AI/ML with Amazon Web Services…. Raj provided technical expertise and leadership in building data engineering, big data analytics, business

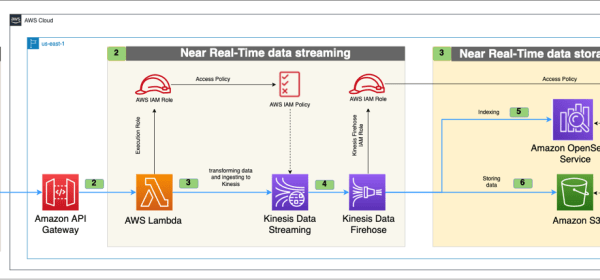

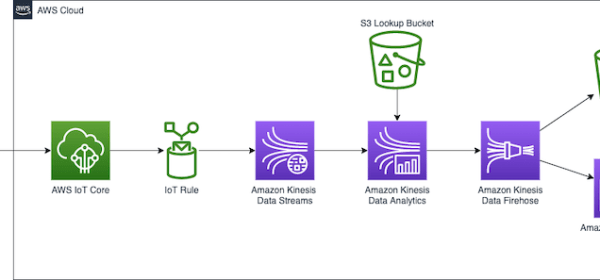

GitHub as the data source, employs Amazon Simple Storage Service (Amazon S3) for scalable storage, manages APIs with Amazon API Gateway, performs serverless computing using AWS Lambda, and facilitates data streaming and ETL (extract, transform, and load) processes through Amazon Kinesis Data Streams and Amazon Kinesis Data Firehose.
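
As a rough illustration of one hop in the architecture described above, the sketch below shows a Lambda function behind API Gateway forwarding a GitHub webhook payload to a Kinesis data stream. It is a minimal sketch under stated assumptions, not the original post's implementation: the stream name `github-events`, the helper `build_record`, and the choice of the repository name as partition key are all hypothetical. The Firehose delivery to Amazon S3 is not shown.

```python
import base64
import json
from typing import Any, Dict

# Hypothetical stream name; the excerpt does not name one.
STREAM_NAME = "github-events"


def build_record(event: Dict[str, Any]) -> Dict[str, Any]:
    """Turn an API Gateway proxy event into put_record keyword arguments."""
    body = event.get("body") or "{}"
    if event.get("isBase64Encoded"):
        body = base64.b64decode(body).decode("utf-8")
    payload = json.loads(body)
    # Assumption: partition by repository so one repo's events share a shard.
    repo = payload.get("repository", {}).get("full_name", "unknown")
    return {
        "StreamName": STREAM_NAME,
        "Data": json.dumps(payload).encode("utf-8"),
        "PartitionKey": repo,
    }


def handler(event, context=None, kinesis_client=None):
    """Lambda entry point; kinesis_client can be injected for offline testing."""
    if kinesis_client is None:
        import boto3  # imported lazily so build_record can be used without AWS

        kinesis_client = boto3.client("kinesis")
    kinesis_client.put_record(**build_record(event))
    return {"statusCode": 202, "body": json.dumps({"status": "queued"})}
```

Keeping the record construction in a pure function separates the payload handling from the AWS call, so the former can be checked locally with a stub client before the function is deployed.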

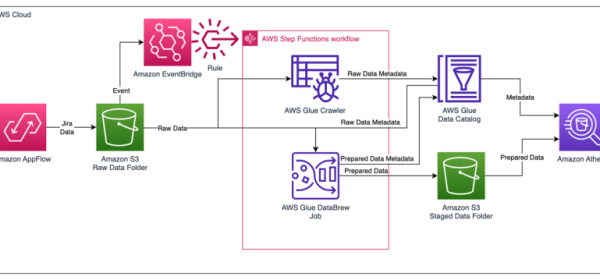

Amazon AppFlow provides software as a service (SaaS) integration with Jira Cloud to load the data into your AWS account. This post shows you how to use Amazon AppFlow and AWS Glue to create a fully automated

About the Authors Saurabh Bhutyani is a Principal Big Data Specialist Solutions Architect at AWS…. Big Data Architect on Amazon Athena.

Apache Flink and Apache Spark are both open-source, distributed data processing frameworks used widely for big data processing and analytics. Spark is known for its

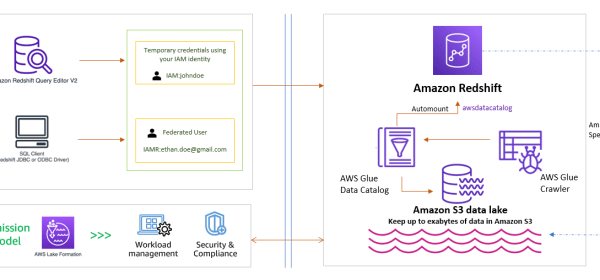

Amazon Redshift is helping tens of thousands of customers manage analytics at scale. Amazon Redshift offers a powerful analytics solution that provides access to insights for users of all skill levels. You can take advantage of the following benefits:

This function reads the DICOM files from Blob Storage and then parses and ingests the metadata into a single flat Azure Data Explorer table. The clinical data pipeline processes the clinical files and ingests the data into the same

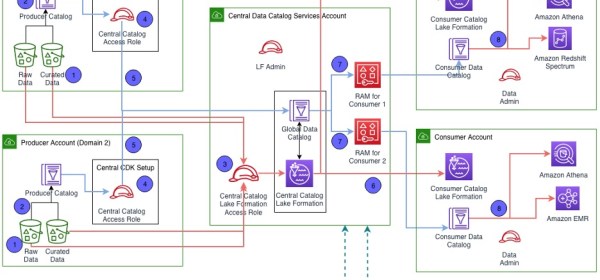

Andrew Long, the lead developer for data mesh, has designed and built many of the big data processing systems that have fueled Amazon’s financial data processing infrastructure.

Noritaka Sekiyama is a Principal Big Data Architect on the AWS Glue team…. He is passionate about architecting fast-growing data environments, diving deep into distributed big data software like Apache Spark, building reusable

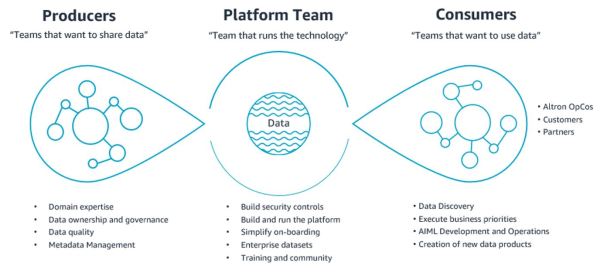

Altron set aside 4 days for their Immersion Day, during which AWS assigned a dedicated Solutions Architect to work alongside them to build the following prototype architecture: The workshop helped devise the think big

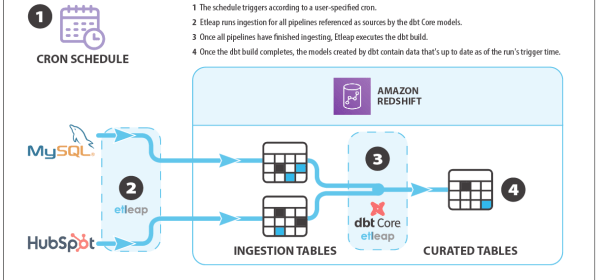

In this post, we explain how data teams can quickly configure low-latency data pipelines that ingest and model data from a variety of sources, using Etleap’s end-to-end pipelines with Amazon Redshift and dbt. End-to-end

To make things simpler, Kinesis Data Analytics Studio is a notebook environment that uses Apache Flink and allows you to query data streams and develop SQL queries or proof of concept workloads before