Understanding Its Role in Data Science and AI

MLOps Hierarchy

The rapid advancement of artificial intelligence (AI) and machine learning has put a spotlight on MLOps, or Machine Learning Operations. The discipline has become essential for organizations that want to get full value from data science. By bridging the gap between data science and IT operations, MLOps makes it possible to deploy machine learning models in production environments and keep them running efficiently and at scale. In this article, we explore the core concepts of MLOps, its relationship with data science, and the key components that make up the practice.

What is MLOps?

MLOps Framework

Definition and Overview of MLOps

MLOps, or Machine Learning Operations, is the practice of operationalizing machine learning models in production environments. It combines machine learning with DevOps principles and data engineering so that models can be deployed and maintained reliably at scale, and retrained on fresh data to preserve their accuracy. Its primary goals are automating the machine learning lifecycle, monitoring model performance, and fostering collaboration between data scientists and IT teams. By following MLOps best practices, organizations can reduce manual intervention and the errors that come with it, which leads to faster and more efficient machine learning projects. Automating data collection, model training, testing, and deployment is crucial for scaling machine learning implementations.

Relationship Between MLOps, Data Science, and AI

The role of MLOps is pivotal as it serves as a bridge between data science, which centers on building machine learning models, and IT operations responsible for deploying and maintaining these models. MLOps incorporates DevOps methodologies to tackle unique challenges faced in AI and data science, such as model decay, data drift, and the necessity for continuous retraining. By building upon continuous integration and continuous delivery (CI/CD) pipelines, MLOps automates the machine learning lifecycle, ensuring that models are not only developed but also actively monitored and updated as new data becomes available. This integration enhances collaboration among data science teams, allowing for more effective deployment of machine learning models.
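Data drift, mentioned above, can be made concrete with a small check. The following is a minimal sketch, assuming a single numeric feature and using the Population Stability Index (PSI) as the drift score. PSI is one common choice rather than the only one, and the function name, the bin count, and the 0.2 alert level (a widely quoted rule of thumb) are illustrative assumptions, not part of any specific MLOps tool.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Return the PSI between a reference sample and a current sample."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for a constant feature

    def bucket_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp so values outside the reference range land in the edge bins.
            counts[max(0, min(bins - 1, idx))] += 1
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), eps) for c in counts]

    ref_shares = bucket_shares(reference)
    cur_shares = bucket_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_shares, cur_shares))


# Hypothetical data: the live feature has shifted toward larger values.
training_feature = [i / 100 for i in range(100)]
live_feature = [0.5 + i / 200 for i in range(100)]

psi = population_stability_index(training_feature, live_feature)
if psi > 0.2:  # assumed alert level; tune it per feature in practice
    print(f"PSI={psi:.2f}: drift detected, consider retraining")
```

A check like this would typically run on a schedule against recent production inputs, with the training data as the reference sample.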

Key Components of MLOps

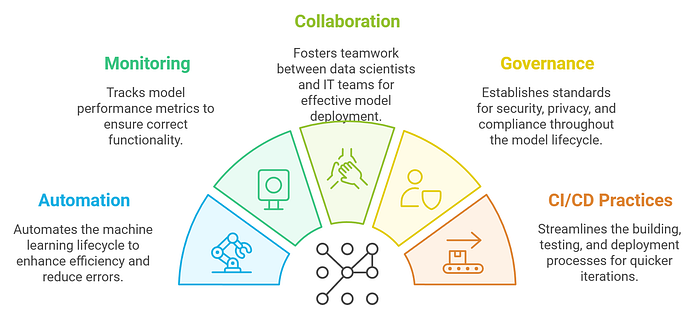

The key components of MLOps are data storage and versioning, continuous integration and continuous delivery (CI/CD), model monitoring, and model governance. Data storage solutions must offer versioning so that changes to datasets and machine learning models can be tracked. CI/CD practices streamline the building, testing, and deployment of models, enabling quicker iterations and faster responses to new data sources. Monitoring solutions track performance metrics to confirm that models keep behaving as expected. Governance establishes standards for security, privacy, and compliance throughout the entire model lifecycle.
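To illustrate the versioning idea, here is a minimal sketch that fingerprints a dataset by hashing its content and records which data version produced which model. It assumes small, JSON-serializable records held in memory. The function names and the in-memory `registry` dictionary are hypothetical stand-ins for what a dedicated data versioning tool would provide.

```python
import hashlib
import json
from typing import Dict, List


def dataset_fingerprint(rows: List[Dict[str, object]]) -> str:
    """Return a short, deterministic fingerprint for a list of records."""
    # sort_keys makes the hash independent of dictionary key order.
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def record_training_run(
    registry: Dict[str, dict],
    model_name: str,
    rows: List[Dict[str, object]],
    params: dict,
) -> str:
    """Store the data version and parameters behind a trained model."""
    version = dataset_fingerprint(rows)
    registry[f"{model_name}@{version}"] = {
        "data_version": version,
        "params": params,
    }
    return version


# Hypothetical usage: any change to the data yields a new version string.
registry: Dict[str, dict] = {}
rows_v1 = [{"id": 1, "label": "cat"}, {"id": 2, "label": "dog"}]
rows_v2 = rows_v1 + [{"id": 3, "label": "bird"}]

v1 = record_training_run(registry, "classifier", rows_v1, {"lr": 0.01})
v2 = record_training_run(registry, "classifier", rows_v2, {"lr": 0.01})
print(v1 != v2, sorted(registry))
```

Because the fingerprint depends only on content, retraining on unchanged data maps to the same version, which makes it easy to tell whether a change in model behavior came from the data or from the code.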

The Importance of MLOps in Data Science

MLOps Integration and Benefits

MLOps as a Bridge Between Data Science and DevOps

MLOps serves as a vital bridge between data science and DevOps, combining the principles of both into a single workflow for deploying and managing machine learning models. This integration lets data scientists, ML engineers, and IT operations teams collaborate effectively and smooths the transition from model development to production. As organizations increasingly rely on AI to drive decision-making, MLOps ensures that machine learning models are not only deployed efficiently but also maintained effectively. Closing the gap between data science and IT in this way allows a more agile response to changing business needs and technological advancements.

Role of MLOps in Machine Learning Projects

MLOps is indispensable in machine learning projects because it covers the entire lifecycle of a model, from data preparation and preprocessing to deployment and ongoing performance monitoring. It ensures that models are built, consistently monitored, and updated as necessary to stay effective. By handling tasks such as data collection, training, and continuous integration and continuous delivery (CI/CD), MLOps lets data scientists focus on improving model performance rather than on managing infrastructure. This shift leads to more reliable and scalable machine learning solutions and raises the productivity of data science teams, so they can respond quickly as data sources evolve.

Benefits of Implementing MLOps

Implementing MLOps brings several benefits to data science initiatives. One of the primary advantages is faster model deployment, since MLOps reduces the time and complexity involved in moving models into production. Closer collaboration between data scientists and IT teams leads to clearer communication and keeps all stakeholders aligned throughout the machine learning lifecycle. MLOps also strengthens model monitoring, allowing organizations to maintain high-quality performance over time. By continuously tracking issues such as data drift and performance degradation, it keeps machine learning models relevant and effective as business requirements evolve.
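As a rough illustration of tracking performance degradation, the sketch below keeps a rolling window of prediction outcomes and flags the model when accuracy in that window falls below a threshold. It assumes ground-truth labels eventually arrive for each prediction. The class name, window size, and threshold are illustrative assumptions.

```python
from collections import deque
from typing import Optional


class RollingAccuracyMonitor:
    """Track accuracy over the most recent `window` labeled predictions."""

    def __init__(self, window: int = 100, min_accuracy: float = 0.9) -> None:
        self._outcomes = deque(maxlen=window)  # old outcomes drop off automatically
        self.min_accuracy = min_accuracy

    def record(self, prediction: object, actual: object) -> None:
        self._outcomes.append(prediction == actual)

    def accuracy(self) -> Optional[float]:
        if not self._outcomes:
            return None  # nothing observed yet
        return sum(self._outcomes) / len(self._outcomes)

    def needs_attention(self) -> bool:
        # Wait for a full window so a few early mistakes do not trigger alerts.
        if len(self._outcomes) < self._outcomes.maxlen:
            return False
        return self.accuracy() < self.min_accuracy


# Hypothetical usage with a tiny window for readability.
monitor = RollingAccuracyMonitor(window=4, min_accuracy=0.75)
for predicted, actual in [("a", "a"), ("b", "b"), ("a", "b"), ("b", "a")]:
    monitor.record(predicted, actual)
print(monitor.accuracy(), monitor.needs_attention())
```

In a real deployment the alert would feed a dashboard or trigger a retraining job rather than print to the console.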

Implementing MLOps Best Practices

MLOps Implementation

Key Steps to Implement MLOps

To implement MLOps effectively, organizations must first establish a clear MLOps strategy that aligns with their overall business objectives. This involves selecting tools and technologies that integrate machine learning operations into existing workflows. Fostering a culture of collaboration between data scientists and IT teams is crucial, as it encourages knowledge sharing and reduces the risks of deploying machine learning models. Automation should be prioritized across stages such as model deployment and monitoring to minimize manual intervention and the errors it can introduce. Finally, organizations should commit to continuous learning and improvement, regularly assessing their MLOps practices and adapting them to evolving business needs and technological advancements.

MLOps Tools and Technologies

Many tools and technologies have emerged to support MLOps practices, each addressing specific parts of the machine learning lifecycle. Prominent examples include MLflow, which focuses on experiment tracking and model management; Kubeflow, which runs machine learning workflows on Kubernetes; and TensorFlow Extended (TFX), which provides components for building production ML pipelines. Organizations need to evaluate their own requirements and select the tools that best fit their MLOps strategy, so that machine learning models in production are managed effectively. Integrating these tools well into existing infrastructure can substantially improve the efficiency, reliability, and scalability of machine learning operations, enabling organizations to respond quickly to new data and changing market conditions.

End-to-End MLOps Workflow

An end-to-end MLOps workflow manages the entire machine learning lifecycle through a sequence of stages: data collection, preprocessing, model training, deployment, and ongoing monitoring. Structuring the work this way ensures that every stage is addressed systematically, which reduces the potential for errors and improves collaboration within the data science team. With clear processes in place, organizations can streamline their operations and maintain high standards of model performance. Continuous monitoring and feedback loops are indispensable, because they allow teams to identify and fix issues promptly during model deployment and operation.
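The stages described above can be sketched as a chain of small functions that pass a shared context along. This is a toy illustration under stated assumptions: the data is hard-coded, the "model" is a single slope fitted by least squares through the origin, evaluation reuses the training data to keep the example short (a real pipeline would hold out a test set), and all names such as `run_pipeline` and `max_mae` are hypothetical.

```python
from typing import Callable, List, Tuple

Context = dict
Stage = Tuple[str, Callable[[Context], Context]]


def collect(ctx: Context) -> Context:
    # Stand-in for reading from a database or data lake.
    ctx["raw"] = [(1.0, 2.1), (2.0, 3.9), (3.0, None), (4.0, 8.2)]
    return ctx


def preprocess(ctx: Context) -> Context:
    # Drop records with a missing target value.
    ctx["clean"] = [(x, y) for x, y in ctx["raw"] if y is not None]
    return ctx


def train(ctx: Context) -> Context:
    # Least-squares slope for the model y = slope * x.
    clean = ctx["clean"]
    ctx["slope"] = sum(x * y for x, y in clean) / sum(x * x for x, _ in clean)
    return ctx


def evaluate(ctx: Context) -> Context:
    # Mean absolute error, computed on the training data for brevity.
    errors = [abs(y - ctx["slope"] * x) for x, y in ctx["clean"]]
    ctx["mae"] = sum(errors) / len(errors)
    return ctx


def deploy(ctx: Context) -> Context:
    # Only "deploy" when the error is within the agreed budget.
    ctx["deployed"] = ctx["mae"] <= ctx["max_mae"]
    return ctx


def run_pipeline(stages: List[Stage], ctx: Context) -> Context:
    for name, stage in stages:
        ctx = stage(ctx)
        ctx.setdefault("log", []).append(name)  # simple audit trail
    return ctx


STAGES: List[Stage] = [
    ("collect", collect),
    ("preprocess", preprocess),
    ("train", train),
    ("evaluate", evaluate),
    ("deploy", deploy),
]

result = run_pipeline(STAGES, {"max_mae": 0.5})
print(result["log"], round(result["mae"], 3), result["deployed"])
```

Keeping each stage as a separate function with an explicit input and output is what lets orchestration tools schedule, retry, and cache the stages independently.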

The Role of MLOps Engineers

MLOps Engineer’s Role and Skills

Skills Required for MLOps Engineers

MLOps engineers play a crucial role at the intersection of machine learning, data engineering, and IT operations. To excel in this field, they need a diverse skill set that combines expertise in machine learning and software engineering with a solid understanding of DevOps practices. Proficiency in programming languages such as Python and R is essential for implementing machine learning models and automating workflows. Familiarity with cloud platforms like AWS, GCP, and Azure enables MLOps engineers to deploy models in scalable production environments. Knowledge of containerization and orchestration technologies such as Docker and Kubernetes is also vital for running machine learning operations effectively. A strong grasp of CI/CD pipelines, version control systems, and monitoring tools further strengthens their ability to maintain and optimize models throughout their lifecycle.

Responsibilities of MLOps Engineers in Projects

The responsibilities of MLOps engineers span the entire machine learning lifecycle, which makes them indispensable in any ML project. They oversee the transition from model development to deployment, ensuring that machine learning models are integrated effectively into production environments. This includes setting up and managing CI/CD pipelines so that deployments and updates run smoothly. MLOps engineers also monitor model performance continuously, address issues as they arise, and make sure models are retrained when needed to maintain accuracy. Their collaboration with data scientists and software engineers helps ensure that models are built with scalability in mind and can handle real-world complexity. By liaising between teams, MLOps engineers help bridge the gap between data science and IT operations and drive successful machine learning initiatives.
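One way such a CI/CD pipeline can guard production is with a promotion gate that compares a newly trained candidate against the model currently in service. The sketch below is illustrative only: the metric names, the minimum improvement, and the latency budget are assumed values that a team would set for its own use case.

```python
from typing import Dict


def should_promote(
    candidate: Dict[str, float],
    production: Dict[str, float],
    metric: str = "accuracy",
    min_improvement: float = 0.01,
    max_latency_ms: float = 200.0,
) -> bool:
    """Decide whether a retrained model may replace the production model."""
    # The candidate must beat production by a meaningful margin...
    improves = candidate[metric] - production[metric] >= min_improvement
    # ...without exceeding the serving latency budget.
    fast_enough = candidate["latency_ms"] <= max_latency_ms
    return improves and fast_enough


# Hypothetical evaluation results produced earlier in the pipeline.
production_metrics = {"accuracy": 0.90, "latency_ms": 110.0}
candidate_metrics = {"accuracy": 0.93, "latency_ms": 120.0}

if should_promote(candidate_metrics, production_metrics):
    print("Promote candidate to production")
else:
    print("Keep current production model")
```

A pipeline step like this would usually fail the build when the gate returns `False`, so that a weaker model never reaches production without a deliberate override.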

Collaboration with Data Scientists and DevOps Teams

Effective collaboration between MLOps engineers, data scientists, and DevOps teams is paramount for the success of machine learning projects, from data preparation through model deployment. MLOps engineers act as a bridge, facilitating communication so that machine learning models are developed, deployed, and maintained efficiently. Working together allows the teams to identify and resolve challenges that would otherwise slow a project down, which raises the overall quality of machine learning initiatives. By streamlining workflows and promoting a culture of continuous improvement, MLOps engineers help embed best practices within the data science team. Ultimately, this collaboration drives innovation and improves how models are deployed, helping organizations realize the full potential of AI and data science.

Future of MLOps

Navigating the Future of MLOps

Trends Shaping the Future of MLOps

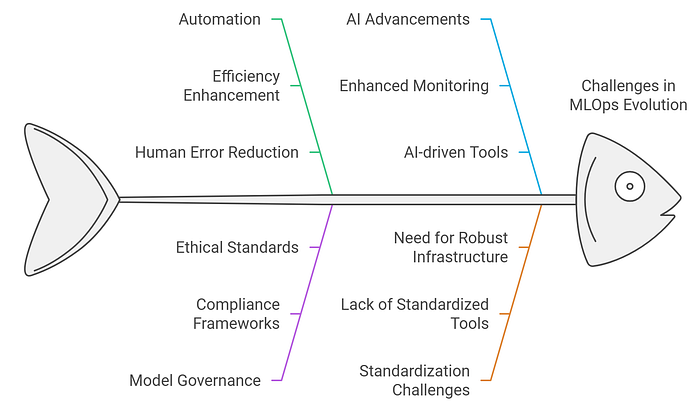

Several emerging trends are reshaping machine learning operations. One of the most notable is the increasing automation of machine learning workflows, as organizations adopt tools and practices that reduce human intervention in repetitive tasks. This focus on automation improves efficiency and lowers the risk of human error. In addition, the growing importance of model governance is pushing organizations to establish robust frameworks that ensure compliance with regulations and ethical standards. As demand for machine learning continues to grow, MLOps practices will evolve to handle the complexity of managing ML systems at scale, fostering innovation and providing a competitive advantage.

Impact of AI Advancements on MLOps

Advancements in artificial intelligence are changing MLOps practices, which must adapt to manage increasingly complex machine learning models. As models become more sophisticated, MLOps needs stronger monitoring and governance frameworks to maintain model performance and adherence to ethical standards. AI-driven tools are also changing how MLOps teams approach model training, monitoring, and retraining on fresh data. These innovations streamline workflows and help organizations get more out of machine learning technologies, so they stay competitive and responsive to evolving data sources and market demands.

Challenges Ahead for MLOps Professionals

Despite the promising outlook for MLOps, professionals in this domain face several challenges. A significant one is the lack of standardized practices and tools, which leads to inconsistent MLOps implementations across organizations. The rapid pace of technological change also demands continuous training and upskilling to keep up with new tools and methodologies. Managing diverse machine learning models across multiple environments adds another layer of complexity, as does the ongoing need to ensure data privacy and regulatory compliance. Addressing these challenges will require collaboration and a commitment to innovation within the MLOps community, so that professionals are equipped to navigate the evolving landscape of machine learning operations.
