What is striking about Suleyman’s heavily promoted book is how the optimism of his will is overwhelmed by the pessimism of his intellect, to borrow a phrase from the Marxist philosopher Antonio Gramsci.

Soon you will live surrounded by AIs. They will organise your life, operate your business, and run core government services. You will live in a world of DNA printers and quantum computers, engineered pathogens and autonomous weapons, robot assistants and abundant energy.

None of us are prepared.

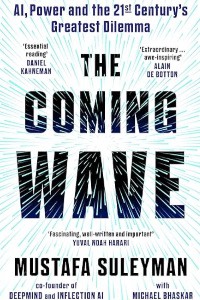

As co-founder of the pioneering AI company DeepMind, part of Google, Mustafa Suleyman has been at the centre of this revolution. The coming decade, he argues, will be defined by this wave of powerful, fast-proliferating new technologies.

In The Coming Wave, Suleyman shows how these forces will create immense prosperity but also threaten the nation-state, the foundation of global order. As our fragile governments sleepwalk into disaster, we face an existential dilemma: unprecedented harms on one side and the threat of overbearing surveillance on the other.

Can we forge a narrow path between catastrophe and dystopia?

This ground-breaking book from the ultimate AI insider establishes ‘the containment problem’ – the task of maintaining control over powerful technologies – as the essential challenge of our age.

Suleyman’s book is most valuable in its concluding section, where he outlines 10 steps towards possible containment. His suggestions would provide a useful primer for any official attending the British government’s forthcoming conference on AI governance at Bletchley Park, even if they marginalise some of the issues flagged by ethics researchers.

Some of Suleyman’s recommendations are technical, sensible and readily implementable. At present, fewer than 1 per cent of the world’s 30,000-plus AI researchers work on safety issues. Tech companies and universities should indeed invest more in this area, as Suleyman urges them to do. It would also help if greater efforts were made to scrub the data sets used to train AI models of inherent societal biases, and to focus more on the explainability and corrigibility (the ability to correct errors) of these models. Where possible, it would make sense to build “bulletproof off switches” into synthetic biology, robotics and AI systems.

The industry would also benefit from external, expert auditors. Profit-seeking companies, which are driving the technology, might also take a broader view of their societal responsibilities if they were to reincorporate as “global interest companies”, an option that, intriguingly, he notes DeepMind once considered.

Governments could play an important role by restricting access to the leading-edge chips that power state-of-the-art AI models and by banning open-source AI models outright for fear they may be abused by bad actors. Unlike many US-based technologists, Suleyman welcomes the EU’s forthcoming AI Act, flawed as it is, for having the right focus and ambition in classifying risks to users. International treaties will be needed to write new rules of war. Civil society will be instrumental in holding the tech companies to account and in shaping new norms for gene editing.

There is no shortage of activity in some of these areas. The OECD’s policy observatory counts no fewer than 800 AI policies across 60 countries in its database. But Suleyman is right to stress the importance of co-ordination and coherence. And that revolves around human agency and the messy art of politics.

