Microservices structure an application as a set of independently deployable services. They speed up software development and allow architects to quickly update systems to adhere to changing business requirements.

According to best practices, the different services should be loosely coupled, organized around business capabilities, independently deployable, and owned by a single team. Applied correctly, microservices offer multiple advantages. However, working with them can also bring challenges. In this edition of Let’s Architect!, we explore the advantages, mental models, and challenges that come with running microservices in containers.

Application integration patterns for microservices

As Tim Bray said in his time with AWS, “If your application is cloud native, large scale, or distributed, and doesn’t include a messaging component, that’s probably a bug.”

This video evaluates several design patterns based on messaging and shows you how to implement them in your workloads to achieve the full capabilities of microservices. You’ll learn some fundamental application integration patterns and some of the benefits that asynchronous messaging can have over REST APIs for communication between microservices.

The scatter-gather pattern scales parallel processing across nodes and aggregates the results in a queue
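To make the scatter-gather idea concrete, here is a minimal in-process sketch. It assumes threads stand in for worker nodes and a `queue.Queue` stands in for the aggregation queue; the function names (`scatter_gather`, `quote_from_vendor_a`, `quote_from_vendor_b`, `best_quote`) are hypothetical and not taken from the video. A real workload would more likely use a managed messaging service between separate processes.

```python
import queue
import threading


def scatter_gather(request, workers):
    """Send the same request to every worker in parallel, then collect all replies."""
    results = queue.Queue()  # stands in for the aggregation queue

    def run(worker):
        # Each worker publishes its reply to the shared results queue.
        results.put((worker.__name__, worker(request)))

    # Scatter: start one thread per worker.
    threads = [threading.Thread(target=run, args=(w,)) for w in workers]
    for t in threads:
        t.start()
    for t in threads:
        t.join()

    # Gather: drain the queue into a dict keyed by worker name.
    replies = {}
    while not results.empty():
        name, value = results.get()
        replies[name] = value
    return replies


# Hypothetical workers: two vendors that price the same item differently.
def quote_from_vendor_a(item):
    return len(item) * 10


def quote_from_vendor_b(item):
    return len(item) * 8


def best_quote(item):
    """Aggregate step: pick the cheapest reply."""
    replies = scatter_gather(item, [quote_from_vendor_a, quote_from_vendor_b])
    return min(replies.items(), key=lambda kv: kv[1])
```

Calling `best_quote("book")` fans the request out to both vendors and returns the name and price of the cheaper one. The sketch waits for every worker; a production version would usually add a timeout so one slow responder cannot block the aggregate.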

Distributed monitoring

Customers often cite monitoring as one of the main challenges while working with containers. Monitoring collects operational data as logs, metrics, events, and traces to identify and respond to issues quickly and minimize disruptions.

This whitepaper covers cross-service challenges in microservices, including service discovery, distributed monitoring, and auditing. You’ll learn about the role of DNS and service meshes in interservice communication and discovery, and about the tools available for logging and for monitoring clusters that run containers.

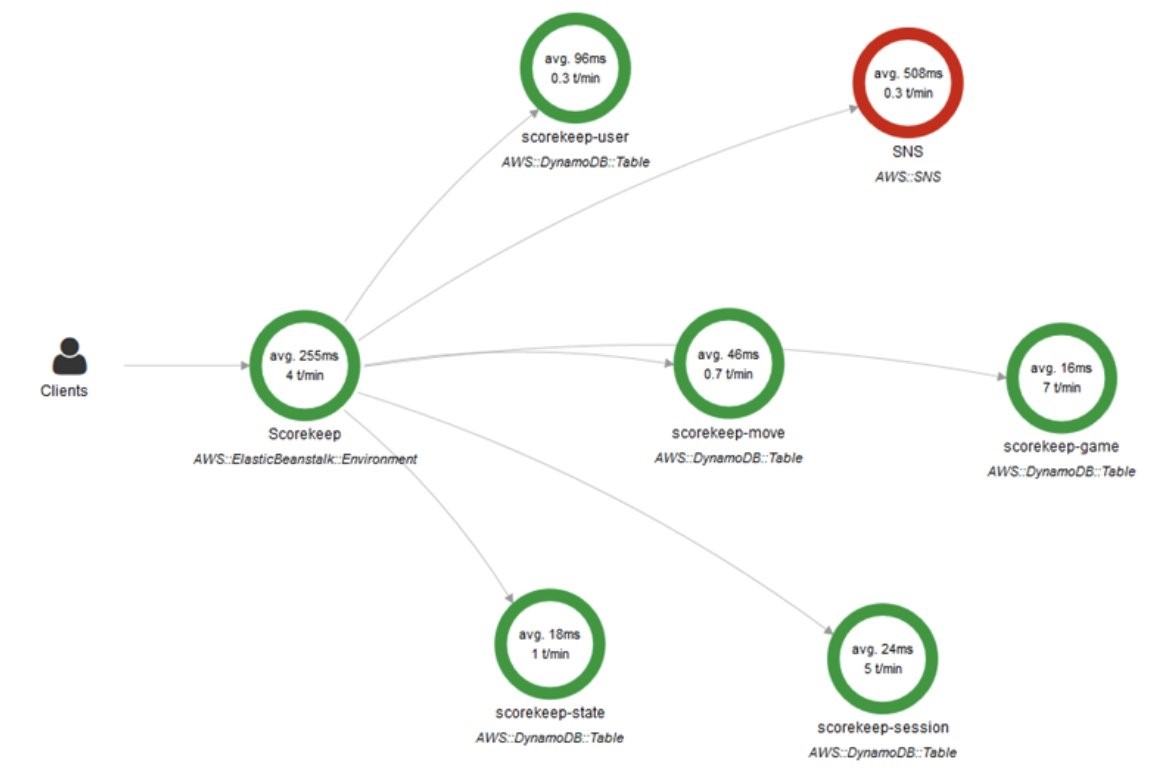

This view from AWS X-Ray shows how a request can be tracked across different services. This is implemented by taking advantage of correlation IDs.
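As a rough illustration of how correlation IDs tie a request together, the sketch below reuses an incoming ID or creates one at the edge, then passes it to every downstream call and log line. The header name `X-Correlation-ID` and the service functions are assumptions chosen for illustration; this is not the AWS X-Ray SDK or its header format.

```python
import uuid

# Hypothetical header name, used here for illustration only.
CORRELATION_HEADER = "X-Correlation-ID"


def with_correlation_id(headers):
    """Return a copy of the headers that is guaranteed to carry a correlation ID."""
    out = dict(headers)
    # Reuse the caller's ID if present; otherwise this service is the entry point.
    out.setdefault(CORRELATION_HEADER, str(uuid.uuid4()))
    return out


def log_line(service, headers, message):
    """Prefix every log line with the correlation ID so logs can be joined later."""
    return f"[{headers[CORRELATION_HEADER]}] {service}: {message}"


def handle_payment(incoming_headers):
    headers = with_correlation_id(incoming_headers)
    return [log_line("payments", headers, "charged")]


def handle_order(incoming_headers):
    headers = with_correlation_id(incoming_headers)
    lines = [log_line("orders", headers, "received")]
    # Forward the same headers so the downstream service logs the same ID.
    lines.extend(handle_payment(headers))
    return lines
```

Because both services log the same ID, searching the aggregated logs for that value reconstructs the path of a single request.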

Create a pipeline with canary deployments for Amazon ECS using AWS App Mesh

When architects deploy a new version of an application, they want to test it on a subset of users before routing all the traffic to the new version. This is known as a “canary deployment.” A canary deployment can automatically switch traffic back to the old version if problems are detected, which limits the impact of any bugs introduced in the new release. For microservices, this is helpful when testing a complex distributed system because you can send a percentage of traffic to newer versions in a controlled manner.
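The core logic of a canary rollout can be sketched in a few lines: route a weighted share of requests to the new version, then either raise that share or roll back based on observed errors. The function names, the 1% error threshold, and the 10% step below are illustrative assumptions, not values or APIs from AWS App Mesh or the referenced blog post.

```python
import random


def choose_version(canary_weight, rng=random.random):
    """Route one request: 'canary' with probability canary_weight, else 'stable'."""
    return "canary" if rng() < canary_weight else "stable"


def next_weight(current_weight, error_rate, max_error_rate=0.01, step=0.1):
    """Decide the canary's traffic share for the next stage of the rollout.

    If the canary's error rate exceeds the threshold, roll back by sending
    all traffic to the stable version; otherwise shift more traffic to it.
    """
    if error_rate > max_error_rate:
        return 0.0  # automatic rollback
    return min(1.0, round(current_weight + step, 2))
```

A pipeline would call `next_weight` after each observation window and apply the result to its routing layer, stopping once the weight reaches 1.0 (full promotion) or 0.0 (rollback).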

A service mesh provides application-level networking so your services can communicate with each other across multiple types of compute infrastructure. This blog post shows how to use AWS App Mesh to implement a canary deployment strategy, with AWS Step Functions orchestrating the different steps during testing and AWS CodePipeline providing continuous delivery of each microservice.

An overview of the architecture used to create the pipeline and perform the canary deployments

Running microservices in Amazon EKS with AWS App Mesh and Kong

Distributed architectures bring up several questions. How do we expose our APIs towards client-side applications? How do our microservices communicate?

This blog post answers these questions with a solution that uses Amazon Elastic Kubernetes Service (Amazon EKS) in conjunction with AWS App Mesh. The solution helps you manage the security and discoverability of microservices. Kong protects your service mesh and runs side by side with your application services.

The Kong for Kubernetes architecture can be implemented using Amazon EKS and AWS App Mesh

See you next time!

See you in a couple of weeks when we discuss open source technologies on AWS!

Looking for more architecture content? AWS Architecture Center provides reference architecture diagrams, vetted architecture solutions, Well-Architected best practices, patterns, icons, and more!
